Installation

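To install a very lightweight version of the Transformers library:

1. Open a terminal or command prompt.
2. Run the following command:

```bash
pip install transformers
```

This uses pip to download the Transformers library from the Python Package Index (PyPI) and install it, so make sure pip is available on your machine. This lightweight install does not include a deep learning framework such as PyTorch or TensorFlow; install the one you plan to use separately.

You can then import the library in your Python code:

```python
import transformers
```

and start using the functionality it provides.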

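If you want an installation that includes the dependencies needed for almost every use case:

1. Open a terminal or command prompt.
2. Run the following command to install Transformers together with the extra dependencies:

```bash
pip install transformers[sentencepiece]
```

(In zsh, square brackets are glob characters, so quote the argument: `pip install "transformers[sentencepiece]"`.)

This installs the regular Transformers release plus its optional `sentencepiece` extra. SentencePiece is a subword tokenization library, not a sentence splitter. The tokenizers of models such as T5, ALBERT, XLNet and Marian rely on it. Because this install pulls in more dependencies, it is larger than the lightweight one.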
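After either install, you can check which of the relevant packages Python can actually find. The following is a minimal sketch that uses only the standard library. The function name `check_installed` and the package list are assumptions for illustration, so adjust the list to whatever you installed.

```python
import importlib.util


def check_installed(packages=("transformers", "sentencepiece", "torch", "tensorflow")):
    """Return {package_name: True/False} depending on whether it can be imported."""
    # find_spec() locates a top-level module without importing it; None means "not found"
    return {name: importlib.util.find_spec(name) is not None for name in packages}


if __name__ == "__main__":
    for name, available in check_installed().items():
        print(f"{name:<15} {'installed' if available else 'missing'}")
```

The check uses import names, which for these four packages match their pip names.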

I. Introduction to Transformer models

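The `pipeline` function in Transformers is a very convenient way to apply a pretrained model directly to text. When no model is specified, the pipeline picks a default pretrained checkpoint for the task, which is downloaded and cached the first time it is used. Here are a few simple examples: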

1. Sentiment analysis:

```python
from transformers import pipeline

classifier = pipeline("sentiment-analysis")
result = classifier("I've been waiting for a HuggingFace course my whole life.")
print(result)
```

Output:

```text
[{'label': 'POSITIVE', 'score': 0.9598047137260437}]
```

You can also pass several sentences at once:

```python
results = classifier([
    "I've been waiting for a HuggingFace course my whole life.",
    "I hate this so much!",
])
print(results)
```

Output:

```text
[{'label': 'POSITIVE', 'score': 0.9598047137260437},
 {'label': 'NEGATIVE', 'score': 0.9994558095932007}]
```

2. Other available pipelines:

Besides sentiment analysis, Transformers provides other ready-made pipelines, such as `feature-extraction`, `ner` (named entity recognition), `question-answering`, `summarization`, `text-generation` and `translation`. Pick the one that fits your task.

For example, `zero-shot-classification` classifies text against labels you supply at call time, without training on them:

```python
classifier = pipeline("zero-shot-classification")
result = classifier(
    "I've been waiting for a HuggingFace course my whole life.",
    candidate_labels=["positive", "negative"],
)
print(result)
```

Output:

```text
{'sequence': "I've been waiting for a HuggingFace course my whole life.",
 'labels': ['positive', 'negative'],
 'scores': [0.9943647985458374, 0.00563523546639061]}
```
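The zero-shot result is a plain dictionary, so reading the prediction out of it needs no model call. The sketch below works on the output shown above. It assumes the pipeline's documented behaviour: labels are sorted by descending score, and in the default single-label mode (no `multi_label=True`) the scores are normalized across the candidate labels so that they sum to 1. The variable names are illustrative.

```python
# Output copied from the zero-shot example above
result = {
    "sequence": "I've been waiting for a HuggingFace course my whole life.",
    "labels": ["positive", "negative"],
    "scores": [0.9943647985458374, 0.00563523546639061],
}

# Labels come back sorted by descending score, so index 0 is the prediction
top_label, top_score = result["labels"][0], result["scores"][0]

# In single-label mode the scores are normalized across the candidate labels
total = sum(result["scores"])

print(f"{top_label}: {top_score:.3f} (scores sum to {total:.6f})")
```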

Worked examples of these pipelines are available in: Transformer models - Hugging Face Course

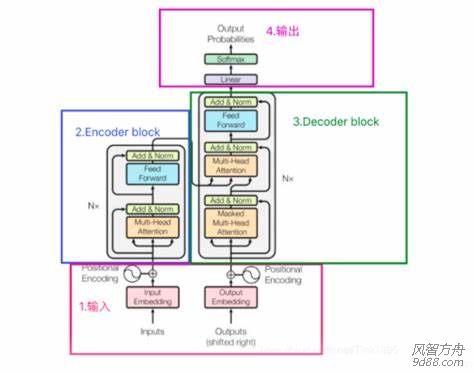

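3. Representative models for each task

| Model | Examples | Tasks |
|---|---|---|
| Encoder | ALBERT, BERT, DistilBERT, ELECTRA, RoBERTa | Sentence classification, named entity recognition, extractive question answering. Encoder models suit tasks that require understanding the full sentence, such as sentence classification, named entity recognition (and, more generally, word classification), and extractive question answering. |
| Decoder | CTRL, GPT, GPT-2, Transformer XL | Text generation. Decoder models are usually pretrained to predict the next word in a sentence, so they are best suited to tasks involving text generation. |
| Encoder-decoder (sequence-to-sequence) | BART, T5, Marian, mBART | Summarization, translation, generative question answering. Sequence-to-sequence models are best suited to tasks that generate new sentences from a given input, such as summarization, translation, or generative question answering. |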

Quiz for this section: Transformer models - Hugging Face Course

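II. Using Transformers

1. Behind the pipeline

A pipeline chains three steps: preprocessing with a tokenizer, passing the inputs through the model, and post-processing. The tokenizer turns raw text into numbers the model can consume. The model computes raw scores (logits) from them. Post-processing converts those scores into a readable result, such as a label with a probability. Chaining the steps this way is what lets a single pipeline call go from raw text to a final answer.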
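To make the three steps concrete without downloading anything, here is a toy, pure-Python stand-in for a sentiment pipeline. Everything in it is hypothetical: the six-word vocabulary, the per-word weights and the label names are invented, and the "model" is a sum of weights rather than a Transformer. Only the shape of the flow (text to IDs, IDs to logits, logits to label and probability) mirrors the real pipeline.

```python
import math

# Hypothetical toy components (invented for illustration, not a real checkpoint)
VOCAB = {"[CLS]": 101, "[SEP]": 102, "i": 1, "love": 2, "hate": 3, "this": 4}
UNK_ID = 100
# Made-up contribution of each token ID to the (negative, positive) logits
TOKEN_WEIGHTS = {2: (-1.0, 2.0), 3: (2.0, -1.0)}
ID2LABEL = {0: "NEGATIVE", 1: "POSITIVE"}


def tokenize(text):
    """Step 1: raw text -> input IDs, wrapped in [CLS] ... [SEP]."""
    ids = [VOCAB.get(word, UNK_ID) for word in text.lower().split()]
    return [VOCAB["[CLS]"]] + ids + [VOCAB["[SEP]"]]


def model(input_ids):
    """Step 2: input IDs -> logits (here just a sum of per-token weights)."""
    neg = sum(TOKEN_WEIGHTS.get(i, (0.0, 0.0))[0] for i in input_ids)
    pos = sum(TOKEN_WEIGHTS.get(i, (0.0, 0.0))[1] for i in input_ids)
    return [neg, pos]


def postprocess(logits):
    """Step 3: logits -> most likely label and its SoftMax probability."""
    shift = max(logits)
    exps = [math.exp(x - shift) for x in logits]
    probs = [e / sum(exps) for e in exps]
    best = probs.index(max(probs))
    return {"label": ID2LABEL[best], "score": probs[best]}


def toy_pipeline(text):
    return postprocess(model(tokenize(text)))


print(toy_pipeline("I love this"))  # POSITIVE with high probability
print(toy_pipeline("I hate this"))  # NEGATIVE with high probability
```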

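1) Preprocessing with a tokenizer

Like other neural networks, Transformer models cannot process raw text directly, so the first step is to turn the text into numbers with a tokenizer. The tokenizer does three things:

- It splits the input into words or subwords (tokens).
- It maps each token to an integer ID.
- It adds any extra inputs the model needs.

This preprocessing must match exactly what was done when the model was pretrained. To guarantee that, use the AutoTokenizer class and its from_pretrained() method, which load the tokenizer associated with a given checkpoint: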

```python
from transformers import AutoTokenizer

checkpoint = "distilbert-base-uncased-finetuned-sst-2-english"
tokenizer = AutoTokenizer.from_pretrained(checkpoint)
```

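To specify the type of tensors to return (PyTorch, TensorFlow, or plain NumPy), use the `return_tensors` argument. The call also sets two other options:

- `padding=True` pads the shorter sentence to the length of the longer one.
- `truncation=True` cuts any sequence that is longer than the model can accept.

```python
raw_inputs = [
    "I've been waiting for a HuggingFace course my whole life.",
    "I hate this so much!",
]
inputs = tokenizer(raw_inputs, padding=True, truncation=True, return_tensors="pt")
print(inputs)
```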

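Here is the result with PyTorch tensors. The output is a dictionary with two keys:

- `input_ids` holds one row of token IDs per sentence. 101 and 102 are the special [CLS] and [SEP] tokens, and 0 is the padding token.
- `attention_mask` has a 1 for every real token and a 0 for every padding position.

```text
{
    'input_ids': tensor([
        [ 101, 1045, 1005, 2310, 2042, 3403, 2005, 1037, 17662, 12172, 2607, 2026, 2878, 2166, 1012, 102],
        [ 101, 1045, 5223, 2023, 2061, 2172, 999, 102, 0, 0, 0, 0, 0, 0, 0, 0]
    ]),
    'attention_mask': tensor([
        [1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1],
        [1, 1, 1, 1, 1, 1, 1, 1, 0, 0, 0, 0, 0, 0, 0, 0]
    ])
}
```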
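The attention mask can be read straight off this output. The plain-Python sketch below uses lists in place of the tensors. It assumes, as holds for this uncased vocabulary, that ID 0 is [PAD] and never appears as a real token. Under that assumption the mask is 1 wherever the ID is not padding. Counting the 1s then gives each sentence's real length: 16 tokens for the first sentence, and 8 for the second ([CLS], six word pieces, [SEP]).

```python
PAD_ID = 0  # assumed [PAD] id of the uncased BERT/DistilBERT vocabulary

# input_ids from the output above, as plain lists
input_ids = [
    [101, 1045, 1005, 2310, 2042, 3403, 2005, 1037, 17662, 12172, 2607, 2026, 2878, 2166, 1012, 102],
    [101, 1045, 5223, 2023, 2061, 2172, 999, 102, 0, 0, 0, 0, 0, 0, 0, 0],
]

# 1 = real token the model should attend to, 0 = padding.
# (The tokenizer builds the mask from the original lengths; comparing against
# PAD_ID only works here because 0 never appears as a real token.)
attention_mask = [[int(tok != PAD_ID) for tok in row] for row in input_ids]

real_lengths = [sum(row) for row in attention_mask]
unpadded = [
    [tok for tok, keep in zip(ids, mask) if keep]
    for ids, mask in zip(input_ids, attention_mask)
]

print(attention_mask[1])
print(real_lengths)
print(unpadded[1])
```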

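2) Model

The model is the pretrained Transformer itself. It takes the tokenizer's output as input and computes its own outputs from it. Different tasks call for different pretrained models, such as BERT or GPT. As with the tokenizer, the model weights are downloaded from the Hugging Face Hub the first time a checkpoint is used, and then cached locally.

Transformers provides an AutoModel class, which also has a from_pretrained() method: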

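```python
from transformers import AutoModel

checkpoint = "distilbert-base-uncased-finetuned-sst-2-english"
model = AutoModel.from_pretrained(checkpoint)
```

Feeding it the preprocessed inputs gives:

```python
outputs = model(**inputs)
print(outputs.last_hidden_state.shape)
# Output:
# torch.Size([2, 16, 768])
```

AutoModel loads only the base Transformer, without a task-specific head, so it returns hidden states. The shape is (batch size, sequence length, hidden size): 2 sentences, 16 tokens each after padding, and 768 features per token.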

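Transformers offers many architectures, each built around a specific task. Here is a partial list. In these names, `*` stands for a model name (for example `BertForSequenceClassification` or `AutoModelForSequenceClassification`):

- `*Model` (retrieve the hidden states)
- `*ForCausalLM`
- `*ForMaskedLM`
- `*ForMultipleChoice`
- `*ForQuestionAnswering`
- `*ForSequenceClassification`
- `*ForTokenClassification`
- and others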

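3) Post-processing

Post-processing interprets the model's output according to the task. For sentiment analysis, for example, it converts the model's scores into probabilities and labels (such as "POSITIVE" or "NEGATIVE"). For question answering, it extracts the answer span from the model's output. This step can be customized to fit each task.

The values the model outputs are logits: raw, unnormalized scores from its last layer. To convert them into probabilities, they need to go through a SoftMax layer. (All Transformers models output logits, because the loss function used for training generally fuses the last activation function, such as SoftMax, with the actual loss function, such as cross-entropy.)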

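```python
import torch
from transformers import AutoModelForSequenceClassification

# AutoModel has no classification head (no `logits`), so load the head-equipped model
model = AutoModelForSequenceClassification.from_pretrained(checkpoint)
outputs = model(**inputs)
# SoftMax over the last dimension turns each row of logits into probabilities
predictions = torch.nn.functional.softmax(outputs.logits, dim=-1)
print(predictions)
print(model.config.id2label)  # maps column index to label name
```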
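The same SoftMax can be written out by hand. The sketch below is plain Python with no Transformers dependency. It applies SoftMax to the single-sentence logits `[-2.7276, 2.8789]` that appear in the batching section of these notes. It then maps the winning column through a label table that is assumed here to be `{0: "NEGATIVE", 1: "POSITIVE"}`, which is what `model.config.id2label` reports for this SST-2 checkpoint.

```python
import math


def softmax(row):
    """Convert one row of logits into probabilities that sum to 1."""
    shift = max(row)  # subtracting the max avoids overflow in exp(); the result is unchanged
    exps = [math.exp(x - shift) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


# Logits for the single sentence (no [CLS]/[SEP]) quoted in the batching section
logits = [[-2.7276, 2.8789]]
# Assumed label mapping of distilbert-base-uncased-finetuned-sst-2-english
id2label = {0: "NEGATIVE", 1: "POSITIVE"}

probabilities = [softmax(row) for row in logits]
labels = [id2label[max(range(len(p)), key=p.__getitem__)] for p in probabilities]
print(probabilities)
print(labels)
```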

2. Models

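1) Creating a Transformer

```python
from transformers import BertConfig, BertModel

# Build the configuration (architecture hyperparameters, BERT-base defaults)
config = BertConfig()

# Build the model from the configuration
model = BertModel(config)
```

A model created this way is randomly initialized. It can be run, but its outputs are meaningless until it is trained.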

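2) Different loading methods

Loading a pretrained checkpoint with from_pretrained() gives a model with trained weights instead of random ones:

```python
from transformers import BertModel

model = BertModel.from_pretrained("bert-base-cased")
```

The weights are downloaded on first use and cached locally. Replacing BertModel with the equivalent AutoModel class makes the code checkpoint-agnostic.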

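3) Saving a model

```python
model.save_pretrained("directory_on_my_computer")
```

This writes the model configuration (config.json) and its weights to that directory. You can later pass the same directory to from_pretrained() to load the model again.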

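4) Using a Transformer model

The tokenizer turns the sequences into vocabulary indices (input IDs). The model only accepts tensors, so convert the list of lists into a tensor before passing it in:

```python
import torch

sequences = ["Hello!", "Cool.", "Nice!"]

# Input IDs produced by a tokenizer for the three sequences above
encoded_sequences = [
    [101, 7592, 999, 102],
    [101, 4658, 1012, 102],
    [101, 3835, 999, 102],
]

# Models accept tensors, not Python lists
model_inputs = torch.tensor(encoded_sequences)
output = model(model_inputs)
```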

3. Tokenizers

1) Loading and saving

```python
from transformers import BertTokenizer

tokenizer = BertTokenizer.from_pretrained("bert-base-cased")
tokenizer("Using a Transformer network is simple")
# Output:
# {'input_ids': [101, 7993, 170, 11303, 1200, 2443, 1110, 3014, 102],
#  'token_type_ids': [0, 0, 0, 0, 0, 0, 0, 0, 0],
#  'attention_mask': [1, 1, 1, 1, 1, 1, 1, 1, 1]}

# Save
tokenizer.save_pretrained("directory_on_my_computer")
```

2) Tokenization

Tokenization happens in two steps. First, the text is split into tokens (for BERT, WordPiece subwords). Then the tokens are converted to input IDs.

```python
from transformers import AutoTokenizer

tokenizer = AutoTokenizer.from_pretrained("bert-base-cased")
sequence = "Using a Transformer network is simple"
tokens = tokenizer.tokenize(sequence)
print(tokens)  # Output: ['Using', 'a', 'transform', '##er', 'network', 'is', 'simple']

# From tokens to input IDs
ids = tokenizer.convert_tokens_to_ids(tokens)
print(ids)  # Output: [7993, 170, 11303, 1200, 2443, 1110, 3014]
```
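BERT's tokenizer uses WordPiece. WordPiece splits a word greedily into the longest pieces found in the vocabulary, and marks every piece that continues a word with `##`. Below is a simplified, lowercasing sketch with a made-up eight-entry vocabulary and made-up IDs. The helper names mirror the tokenizer methods above but are hypothetical. Unlike the real tokenizer, this sketch does not split punctuation, does not respect per-checkpoint casing, and does not use a real vocabulary.

```python
# Tiny hypothetical vocabulary; real BERT vocabularies have tens of thousands of entries
VOCAB = {"[UNK]": 0, "using": 1, "a": 2, "transform": 3, "##er": 4,
         "network": 5, "is": 6, "simple": 7}


def wordpiece(word, vocab, unk_token="[UNK]"):
    """Greedy longest-match-first split of one word into WordPiece tokens."""
    pieces, start = [], 0
    while start < len(word):
        end, piece = len(word), None
        # Try the longest remaining substring first, shrinking it from the right
        while start < end:
            candidate = word[start:end]
            if start > 0:
                candidate = "##" + candidate  # continuation pieces carry the ## prefix
            if candidate in vocab:
                piece = candidate
                break
            end -= 1
        if piece is None:
            return [unk_token]  # nothing matched: the whole word becomes [UNK]
        pieces.append(piece)
        start = end
    return pieces


def tokenize(text, vocab):
    """Lowercase, split on whitespace, then WordPiece each word."""
    return [piece for word in text.lower().split() for piece in wordpiece(word, vocab)]


def convert_tokens_to_ids(tokens, vocab):
    return [vocab.get(tok, vocab["[UNK]"]) for tok in tokens]


tokens = tokenize("Using a Transformer network is simple", VOCAB)
print(tokens)
print(convert_tokens_to_ids(tokens, VOCAB))
```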

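3) Decoding

Decoding goes the other way, from input IDs back to a string. decode() also merges subword pieces back into whole words:

```python
decoded_string = tokenizer.decode([7993, 170, 11303, 1200, 2443, 1110, 3014])
print(decoded_string)  # Output: 'Using a Transformer network is simple'
```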
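Decoding can be sketched the same way:

1. Map each ID back to its token.
2. Glue `##` continuation pieces onto the preceding word.

In the ID table below, 101, 102 and 0 mirror the uncased BERT IDs of [CLS], [SEP] and [PAD]; all other entries are invented. The real `decode()` also handles details such as spacing around punctuation. It also accepts `skip_special_tokens=True` to drop special tokens, which this sketch imitates with a parameter of the same name.

```python
# Hypothetical id -> token table (only the special-token IDs follow uncased BERT)
ID2TOKEN = {101: "[CLS]", 102: "[SEP]", 0: "[PAD]",
            1: "using", 2: "a", 3: "transform", 4: "##er"}
SPECIAL_TOKENS = {"[CLS]", "[SEP]", "[PAD]"}


def decode(ids, id2token, skip_special_tokens=False):
    """Map IDs back to tokens and merge ##-continuation pieces into whole words."""
    words = []
    for tok in (id2token[i] for i in ids):
        if skip_special_tokens and tok in SPECIAL_TOKENS:
            continue
        if tok.startswith("##") and words:
            words[-1] += tok[2:]  # continuation: append without a space
        else:
            words.append(tok)
    return " ".join(words)


ids = [101, 1, 2, 3, 4, 102, 0]
print(decode(ids, ID2TOKEN))                            # [CLS] using a transformer [SEP] [PAD]
print(decode(ids, ID2TOKEN, skip_special_tokens=True))  # using a transformer
```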

4. Handling multiple sequences

1) Models expect a batch of inputs

Convert the list of IDs into a tensor and send it to the model. Transformers models expect a batch of sequences by default, so wrap the IDs in an extra list (`[ids]`) to add a batch dimension. Passing `torch.tensor(ids)` alone fails.

```python
import torch
from transformers import AutoTokenizer, AutoModelForSequenceClassification

checkpoint = "distilbert-base-uncased-finetuned-sst-2-english"
tokenizer = AutoTokenizer.from_pretrained(checkpoint)
model = AutoModelForSequenceClassification.from_pretrained(checkpoint)

sequence = "I've been waiting for a HuggingFace course my whole life."
tokens = tokenizer.tokenize(sequence)
ids = tokenizer.convert_tokens_to_ids(tokens)

# [ids] adds the batch dimension: shape (1, sequence_length)
input_ids = torch.tensor([ids])
print("Input IDs:", input_ids)

output = model(input_ids)
print("Logits:", output.logits)
# Output:
# Input IDs: [[ 1045, 1005, 2310, 2042, 3403, 2005, 1037, 17662, 12172, 2607, 2026, 2878, 2166, 1012]]
# Logits: [[-2.7276, 2.8789]]
```

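2) Padding the inputs

Sentences in a batch usually differ in length, but a tensor must be rectangular. Shorter sequences are therefore padded with the tokenizer's padding token (`tokenizer.pad_token_id`):

```python
model = AutoModelForSequenceClassification.from_pretrained(checkpoint)

sequence1_ids = [[200, 200, 200]]
sequence2_ids = [[200, 200]]
batched_ids = [
    [200, 200, 200],
    [200, 200, tokenizer.pad_token_id],
]

print(model(torch.tensor(sequence1_ids)).logits)
print(model(torch.tensor(sequence2_ids)).logits)
print(model(torch.tensor(batched_ids)).logits)
# Output:
# tensor([[ 1.5694, -1.3895]], grad_fn=<AddmmBackward>)
# tensor([[ 0.5803, -0.4125]], grad_fn=<AddmmBackward>)
# tensor([[ 1.5694, -1.3895],
#         [ 1.3373, -1.2163]], grad_fn=<AddmmBackward>)
```

The second row of the batched logits does not match the logits of `sequence2_ids` on its own, because the model's attention layers also take the padding token into account. To make the model ignore padding, pass an attention mask (1 for real tokens, 0 for padding) through the `attention_mask` argument.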
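The padded row changes because each attention layer mixes information from every position it is allowed to see, so the padding token leaks into the result. The toy below is not a Transformer and uses invented numbers. It reproduces that effect with a simple average, then shows that a 0 in the mask removes it. With the real model, the corresponding call is `model(torch.tensor(batched_ids), attention_mask=torch.tensor([[1, 1, 1], [1, 1, 0]]))`. According to the Hugging Face course, that call gives the same logits for the second row as `sequence2_ids` alone.

```python
# Toy stand-in for attention (NOT a real Transformer): each token ID gets an
# invented scalar "embedding", and the sequence representation is the average
# over the positions the model is allowed to attend to.
PAD_ID = 0  # plays the role of tokenizer.pad_token_id in this sketch
EMBEDDING = {200: 1.0, PAD_ID: -5.0}


def pooled(ids, attention_mask=None):
    if attention_mask is None:
        attention_mask = [1] * len(ids)  # no mask: every position is attended to
    kept = [EMBEDDING[i] for i, m in zip(ids, attention_mask) if m == 1]
    return sum(kept) / len(kept)


alone = pooled([200, 200])                              # like sequence2_ids on its own
padded_no_mask = pooled([200, 200, PAD_ID])             # padded, padding attended to
padded_masked = pooled([200, 200, PAD_ID], [1, 1, 0])   # padded, padding masked out

print(alone, padded_no_mask, padded_masked)  # 1.0 -1.0 1.0
```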

5. Putting it all together

We have explored how tokenizers work and looked at tokenization, conversion to input IDs, padding, truncation, and attention masks. The Transformers API handles all of these for us when the tokenizer is called directly:

```python
from transformers import AutoTokenizer

checkpoint = "distilbert-base-uncased-finetuned-sst-2-english"
tokenizer = AutoTokenizer.from_pretrained(checkpoint)

# Tokenize a single sequence
sequence = "I've been waiting for a HuggingFace course my whole life."
model_inputs = tokenizer(sequence)

# Tokenize several sequences at once
sequences = ["I've been waiting for a HuggingFace course my whole life.", "So have I!"]
model_inputs = tokenizer(sequences)
```

It can pad according to several strategies:

```python
# Pad the sequences up to the length of the longest sequence in the batch
model_inputs = tokenizer(sequences, padding="longest")

# Pad the sequences up to the model's maximum length
# (512 for BERT or DistilBERT)
model_inputs = tokenizer(sequences, padding="max_length")

# Pad the sequences up to the specified max length
model_inputs = tokenizer(sequences, padding="max_length", max_length=8)
```

It can also truncate sequences:

```python
sequences = ["I've been waiting for a HuggingFace course my whole life.", "So have I!"]

# Truncate sequences that are longer than the model's maximum length
# (512 for BERT or DistilBERT)
model_inputs = tokenizer(sequences, truncation=True)

# Truncate sequences that are longer than the specified max length
model_inputs = tokenizer(sequences, max_length=8, truncation=True)
```
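The padding and truncation options above can be modelled on plain ID lists. The function below is a simplified, hypothetical re-implementation, not the tokenizer's code; its name and its `pad_id=0` default are chosen for this sketch. It pads to the longest sequence or to a fixed `max_length`, builds the matching attention mask, and truncates by slicing.

It differs from the real tokenizer in two ways:

- The real tokenizer keeps [SEP] at the end when it truncates, while this sketch simply cuts the list (visible in the second output).
- The real tokenizer falls back to the model's maximum length when `padding="max_length"` is given without `max_length`, whereas this sketch raises an error.

```python
def pad_and_truncate(batch, padding="longest", max_length=None, truncation=False, pad_id=0):
    """Simplified model of tokenizer padding/truncation on lists of IDs."""
    if truncation and max_length is not None:
        batch = [seq[:max_length] for seq in batch]  # naive cut: may drop a trailing [SEP]
    if padding == "longest":
        target = max(len(seq) for seq in batch)
    elif padding == "max_length":
        if max_length is None:
            raise ValueError("this sketch needs max_length when padding='max_length'")
        target = max_length
    else:
        target = 0  # no padding requested
    # Multiplying a list by a negative number gives [], so long sequences stay unpadded
    input_ids = [seq + [pad_id] * (target - len(seq)) for seq in batch]
    attention_mask = [[1] * len(seq) + [0] * (target - len(seq)) for seq in batch]
    return {"input_ids": input_ids, "attention_mask": attention_mask}


batch = [[101, 7, 8, 9, 10, 11, 102], [101, 12, 102]]  # made-up IDs
longest = pad_and_truncate(batch, padding="longest")
fixed = pad_and_truncate(batch, padding="max_length", max_length=5, truncation=True)
print(longest)
print(fixed)
```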

Finally, it can convert the result to framework-specific tensors that can be sent directly to the model:

```python
sequences = ["I've been waiting for a HuggingFace course my whole life.", "So have I!"]

# Return PyTorch tensors
model_inputs = tokenizer(sequences, padding=True, return_tensors="pt")

# Return TensorFlow tensors (requires TensorFlow)
model_inputs = tokenizer(sequences, padding=True, return_tensors="tf")

# Return NumPy arrays
model_inputs = tokenizer(sequences, padding=True, return_tensors="np")
```

Special tokens

The tokenizer adds the special token [CLS] at the beginning and [SEP] at the end, because the model was pretrained with them. Comparing its output with the manual tokenize() + convert_tokens_to_ids() route shows the difference:

```python
sequence = "I've been waiting for a HuggingFace course my whole life."

model_inputs = tokenizer(sequence)
print(model_inputs["input_ids"])

tokens = tokenizer.tokenize(sequence)
ids = tokenizer.convert_tokens_to_ids(tokens)
print(ids)
# Output:
# [101, 1045, 1005, 2310, 2042, 3403, 2005, 1037, 17662, 12172, 2607, 2026, 2878, 2166, 1012, 102]
# [1045, 1005, 2310, 2042, 3403, 2005, 1037, 17662, 12172, 2607, 2026, 2878, 2166, 1012]

print(tokenizer.decode(model_inputs["input_ids"]))
print(tokenizer.decode(ids))
# Output:
# "[CLS] i've been waiting for a huggingface course my whole life. [SEP]"
# "i've been waiting for a huggingface course my whole life."
```
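Adding the special tokens amounts to wrapping the ID list. The sketch below assumes the uncased BERT/DistilBERT IDs 101 for [CLS] and 102 for [SEP], the values visible in the output above. It also shows the pair layout `[CLS] A [SEP] B [SEP]` that BERT-style tokenizers use when called with two sentences, as in `tokenizer(sentence_a, sentence_b)`. The helper name `add_special_tokens` is hypothetical.

```python
CLS_ID, SEP_ID = 101, 102  # [CLS] / [SEP] in the uncased BERT & DistilBERT vocabulary


def add_special_tokens(ids_a, ids_b=None):
    """Wrap one sequence as [CLS] A [SEP], or a pair as [CLS] A [SEP] B [SEP]."""
    if ids_b is None:
        return [CLS_ID] + ids_a + [SEP_ID]
    return [CLS_ID] + ids_a + [SEP_ID] + ids_b + [SEP_ID]


# IDs from tokenize() + convert_tokens_to_ids() above (no special tokens yet)
ids = [1045, 1005, 2310, 2042, 3403, 2005, 1037, 17662, 12172, 2607, 2026, 2878, 2166, 1012]
print(add_special_tokens(ids))
```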

Wrapping up: from tokenizer to model

```python
import torch
from transformers import AutoTokenizer, AutoModelForSequenceClassification

checkpoint = "distilbert-base-uncased-finetuned-sst-2-english"
tokenizer = AutoTokenizer.from_pretrained(checkpoint)
model = AutoModelForSequenceClassification.from_pretrained(checkpoint)

sequences = ["I've been waiting for a HuggingFace course my whole life.", "So have I!"]

# One call handles tokenization, padding, truncation and tensor conversion
tokens = tokenizer(sequences, padding=True, truncation=True, return_tensors="pt")
output = model(**tokens)
```

Quiz for this section: Transformer models - Hugging Face Course